Dec 15 2020

Nissan’s Quick Response Quality Control (QRQC)

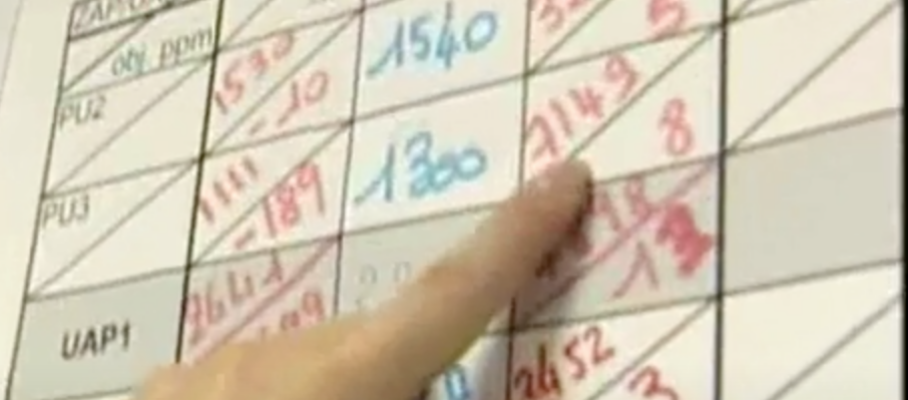

Nissan’s Quick Response Quality Control (QRQC) is a management approach. It’s about organizing the response to quality problems, not about the technical tools used to solve them. It is intended to help detect problems, solve them, and document solutions, thereby growing the skills of the workforce. QRQC neither mandates nor excludes mistake-proofing or any statistical/data science tool.

This post is meant to introduce QRQC to those who have not heard of it, but it is also a call for practitioners to correct any misperceptions, add details, or share their experience.

Mar 12 2021

Process capability

The literature on quality defines process capability as a metric that compares the variability of a process's output with its tolerances. There are, in fact, two different concepts:

By Michel Baudin • Tools 6 • Tags: Cpk, First-pass Yield, Quality, Rolled Throughput Yield, Run-by-Run, SPC, Yield