Apr 3 2026

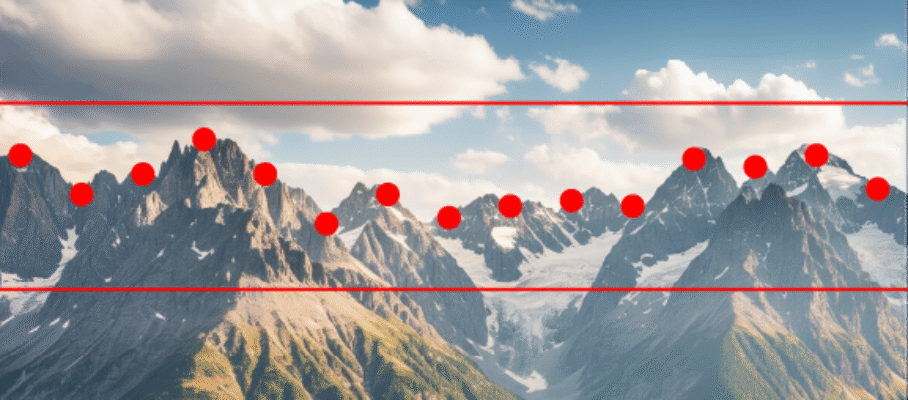

Deviating Standard Deviations

This basic concept deserves revisiting. The following is from a blog post from 2022 hosted by a supplier of statistical software intended to explain the meaning of some notations in plain, simple terms:

The author calls two different things by the same name. If the standard deviation of each variable is 1, how could its expected value be anything else? The confusion within this nonsensical statement is the same one we make when we equate the temperature of a soup with a thermometer reading. In our mental model of a bowl of soup, it has a temperature that exists regardless of our ability to measure it, and the thermometer reading is only an estimate of it.

For the purposes of eating soup, confusing the two is harmless, unless the thermometer, poorly calibrated, always gives you an answer that is 15°F off. This is the situation we have with the most commonly used estimator of the standard deviation of a random variable from a small sample. It is biased, and c_4(N) is a correction factor applicable when the random variable is Gaussian and the sample size is N.

To describe c_4(N) accurately, we need to dig into probability theory. It is, in fact, the expected value of the estimator S=\sqrt{\frac{1}{N-1}\sum_{i=1}^{N}\left ( X_i -\bar{X} \right )^2} of the standard deviation from a sample of N independent Gaussian variables \left ( X_1, \dots, X_N \right ) with unit standard deviation, \sigma = 1. This is an accurate statement, but every term in it needs an explanation.
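As a rough check on this statement, the sketch below compares two ways of getting at c_4(N). The first uses the standard closed form c_4(N) = \sqrt{\frac{2}{N-1}}\,\Gamma\left(\frac{N}{2}\right)/\Gamma\left(\frac{N-1}{2}\right), which is not spelled out above. The second simulates many Gaussian samples with \sigma = 1 and averages S. The function names, the number of trials, and the seed are illustrative choices, not part of the original post.

```python
import math
import random


def c4(n: int) -> float:
    """Closed-form expected value of S for n independent Gaussians with sigma = 1."""
    if n < 2:
        raise ValueError("S needs at least two observations")
    # Work with log-Gamma so the ratio stays finite for large n.
    log_ratio = math.lgamma(n / 2) - math.lgamma((n - 1) / 2)
    return math.sqrt(2 / (n - 1)) * math.exp(log_ratio)


def sample_s(sample: list[float]) -> float:
    """The estimator S: square root of the sum of squares divided by N - 1."""
    n = len(sample)
    mean = sum(sample) / n
    return math.sqrt(sum((x - mean) ** 2 for x in sample) / (n - 1))


def simulated_mean_of_s(n: int, trials: int = 20_000, seed: int = 0) -> float:
    """Average S over many simulated samples of size n drawn with sigma = 1."""
    rng = random.Random(seed)  # assumed seed, only for repeatability
    total = 0.0
    for _ in range(trials):
        total += sample_s([rng.gauss(0.0, 1.0) for _ in range(n)])
    return total / trials


if __name__ == "__main__":
    for n in (2, 5, 10, 25):
        print(n, round(c4(n), 4), round(simulated_mean_of_s(n), 4))
```

For N = 5, the closed form gives about 0.94: on average, S underestimates a true \sigma of 1 by roughly 6%, and dividing S by c_4(N) removes that bias in the Gaussian case.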

Jun 2 2026

Some Hazardous Ideas | Don Wheeler | Quality Digest

On 5/28/2026, in Quality Digest, Donald J. Wheeler bashed “hazardous ideas” from a list he received from Allen Scott. Allen is a frequent contradictor of mine on LinkedIn, and I welcome his comments whenever they go beyond calling my work “garbage.” Although Wheeler does not name me as the originator or a propagator of these “hazardous ideas,” I’ll take the bait and respond about the ones I actually support.

The “hazardous ideas” are all about SPC, which, to Wheeler, boils down to XmR charts, relabeled “Process Behavior Charts.” SPC receives more attention than it should, given that it is used in practice almost exclusively for ceremonial purposes. The most dramatic quality problems of the past 25 years were tread separation on Firestone tires mounted on Ford Explorers in 2001, Toyota’s unintended acceleration in 2010, and the Boeing 737 MAX crashes in 2018 and 2019. None of them was related to or solved with SPC.

Google’s Ngram Viewer shows the relative frequency of quality-related key phrases in English-language books peaking in the 1980s and 90s and waning since. “Quality Control” is now down to its 1940 level. All the other phrases followed similar trajectories, peaking later, the latest one being “Six Sigma” in the late 2000s. Oddly, the only one with any uptick since 2010 is “SPC,” and it dominates all the others.

The overall decline in interest in the field is worrisome, given that there is no evidence that the quality of manufactured goods has been improving since the turn of the century. The J.D. Power and Associates Initial Quality Surveys of car brands show massive increases in customer complaints, and the life of household appliances has been getting shorter. If the quality of manufactured goods is deteriorating, we should see more publications on fixing it rather than fewer, and they should focus on approaches that address today’s problems with today’s technology.

So, to contribute further to the excessive attention to SPC, let’s run through some of the ideas Wheeler deems hazardous.

By Michel Baudin • Blog reviews 2 • Tags: Quality, Shewhart, SPC, Wheeler