Mar 5 2024

Process Control and Gaussians

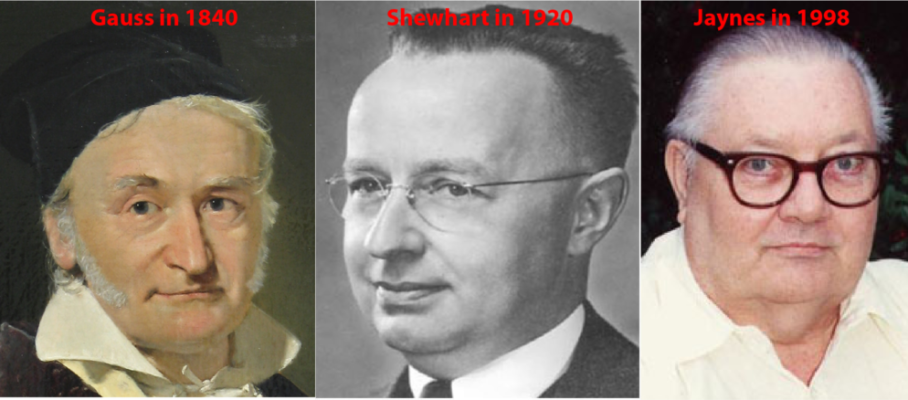

The statistical quality profession has a love/hate relationship with the Gaussian distribution. In SPC, the profession treats the distribution like an embarrassing spouse: it bases all its control limits on the Gaussian while claiming that the distribution doesn’t matter. In 2024, what role, if any, should this distribution play in setting action limits for quality characteristics?

Apr 24 2024

When Not to Connect the Dots

When plotting a sequence of points, should we connect the dots into a line? We usually do, but it shouldn’t be a foregone conclusion. Every chart element should have a clear and precise meaning: if we can’t explain what an element means, or if its meaning is ambiguous, it confuses readers and we should omit it.

The bulk of the SPC literature shows Control Charts as broken-line graphs. A hundred years ago, Walter Shewhart, the inventor of these charts, plotted separate points instead. He did not explain why, so it’s on us to try to figure out what his reasons may have been.

By Michel Baudin • Data science 1