Dec 27 2011

Is SPC obsolete?

In the broadest sense, Statistical Process Control (SPC) is the application of statistical tools to characteristics of materials in order to achieve and maintain process capability. In this broad sense, you couldn’t say that it is obsolete, but common usage is more restrictive.

The semiconductor process engineers who apply statistical design of experiments (DOE) to the same goals don’t describe what they do as SPC. When manufacturing professionals talk about SPC, they usually mean Control Charts, Histograms, Scatter Plots, and other techniques dating from the 1920s through World War II. In the 21st century, this body of knowledge is definitely obsolete.

Tools like Control Charts or Binomial Probability Paper have impressive theoretical foundations, but they were designed to work around the limitations of the information technology of the 1920s. Data were recorded on paper spreadsheets, statistical parameters were looked up in books of tables, and computations were done with slide rules, adding machines or, in some parts of Asia, abacuses (see Figure 1).

In Control Charts, for example, using ranges instead of standard deviations was a way to simplify calculations. These clever tricks addressed issues we no longer have.
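To make the shortcut concrete, here is a minimal sketch that estimates the process standard deviation both ways and derives X-bar chart limits from each. The subgroup data are made up for illustration, the function names are hypothetical, and the d2 values are the standard published constants for normally distributed data; this is not Shewhart's own procedure verbatim.

```python
import statistics

# Published d2 constants (normal data) for subgroup sizes 2 to 5.
D2 = {2: 1.128, 3: 1.693, 4: 2.059, 5: 2.326}

def sigma_from_ranges(subgroups):
    """Range shortcut: average subgroup range divided by d2."""
    n = len(subgroups[0])
    r_bar = statistics.mean(max(g) - min(g) for g in subgroups)
    return r_bar / D2[n]

def sigma_from_stdevs(subgroups):
    """Pooled within-subgroup standard deviation (trivial on a computer)."""
    pooled_var = statistics.mean(statistics.variance(g) for g in subgroups)
    return pooled_var ** 0.5

def xbar_limits(subgroups, sigma):
    """Return (LCL, center, UCL) for subgroup averages at 3 sigma."""
    n = len(subgroups[0])
    center = statistics.mean(statistics.mean(g) for g in subgroups)
    half_width = 3 * sigma / n ** 0.5
    return center - half_width, center, center + half_width

# Hypothetical measurements: 4 subgroups of 5 parts each.
subgroups = [
    [10.1, 9.9, 10.0, 10.2, 9.8],
    [10.0, 10.3, 9.7, 10.1, 9.9],
    [9.9, 10.0, 10.1, 10.0, 10.0],
    [10.2, 9.8, 10.0, 9.9, 10.1],
]

for name, estimator in [("ranges", sigma_from_ranges),
                        ("std devs", sigma_from_stdevs)]:
    sigma = estimator(subgroups)
    lcl, center, ucl = xbar_limits(subgroups, sigma)
    print(f"{name:>8}: sigma={sigma:.3f}  LCL={lcl:.3f}  UCL={ucl:.3f}")
```

On this toy data the two estimates land within about 0.01 of each other. The range method needs only a subtraction per subgroup and one table lookup, which is exactly what made it attractive when the arithmetic was done by hand.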

Figure 1. Information technology in the 1920s

Another consideration is the manufacturing technology for which process capability needs to be achieved. Shewhart developed control charts at Western Electric, AT&T’s manufacturing arm and the high technology of the 1920s.

The number of critical parameters and the tolerance requirements of its products bear no comparison with those of their descendants in 21st-century electronics.

For integrated circuits in particular, the key parameters cannot be measured until testing at the end of a process that takes weeks and hundreds of operations, and the root causes of problems are often more complex interactions between features built at multiple operations than can be understood with the tools of SPC.

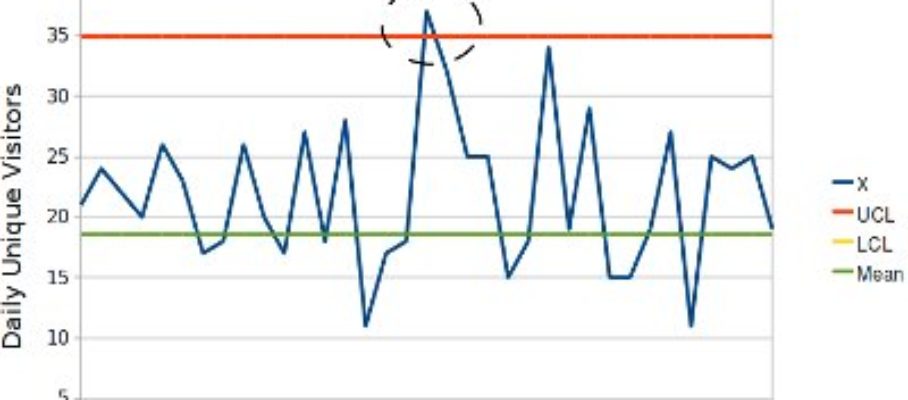

In addition, the quantity of data generated is much larger than anything the SPC techniques were meant to handle. If you capture 140 parameters per chip, on 400 chips/wafer and 500 wafers/day, that is 28,000,000 measurements per day. SPC dealt with a trickle of data; in current electronics manufacturing, it comes out of a fire hose, and this is still nothing compared to the daily terabytes generated in e-commerce or internet search (See Figure 2).
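The arithmetic behind that figure is easy to check. The sketch below reproduces it and adds two back-of-the-envelope conversions; the 8 bytes per measurement and the round-the-clock production schedule are assumptions made for illustration, not figures from any particular fab.

```python
PARAMS_PER_CHIP = 140
CHIPS_PER_WAFER = 400
WAFERS_PER_DAY = 500

measurements_per_day = PARAMS_PER_CHIP * CHIPS_PER_WAFER * WAFERS_PER_DAY
print(f"{measurements_per_day:,} measurements per day")  # 28,000,000

# Assumption: production runs 24 hours a day.
per_second = measurements_per_day / (24 * 60 * 60)
print(f"about {per_second:.0f} measurements per second")

# Assumption: each measurement is stored as an 8-byte float.
bytes_per_day = measurements_per_day * 8
print(f"about {bytes_per_day / 1e6:.0f} MB per day")
```

Under these assumptions the raw volume is a few hundred megabytes a day: far beyond what paper charts can absorb, yet small next to the terabytes mentioned above.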

Figure 2. Data, from trickle to flood, 1920 to 2011

What about mature industries? SPC is a form of supervisory control. It is not about telling machines what to do and making sure they do it, but about checking that the output is as expected, detecting deviations or drifts, and triggering human intervention before these anomalies have a chance to damage products.

Since the 1920s, however, lower-level controls embedded in the machines have improved enough to make control charts redundant. The SPC literature recommends measurements over go/no-go checking, because measurements provide richer information, but the tables are turned once process capability is no longer the issue.

The quality problems in machining or fabrication today are generated by discrete events like tool breakage or human error, including picking wrong parts, mistyping machine settings or selecting the wrong process program. The challenge is to detect these incidents and react promptly, and, for this purpose, go/no-go checking with special-purpose gauges is faster and better than taking measurements.

In a nutshell, SPC is yesterday’s statistical technology to solve the problems of yesterday’s manufacturing. It doesn’t have the power to address the problems of today’s high technology, and it is unnecessary in mature industries. The reason it is not completely dead is that it has found its way into standards that customers impose on their suppliers, even when they don’t comply themselves. This is why you still see Control Charts posted on hallway walls in so many plants.

But SPC has left a legacy. In many ways, Six Sigma is SPC 2.0. It has the same goals, with more modern tools and a different implementation approach to address the challenge of bringing statistical thinking to the shop floor.

That TV journalists describe all changes as “significant” reveals how far the vocabulary of statistics has spread; that they use it without qualifiers shows that they don’t know what it means. They might argue that levels of significance would take too long to explain in a newscast, but, if that were the concern, they could save air time by just saying “change.” In fact, they are just using the word to add weight and make the change sound more, well, significant.

Over decades, the promoters of SPC have not succeeded in getting basic statistical concepts understood in factories. Even in plants that claimed to practice “standard SPC,” I have seen technicians arbitrarily picking parts here and there in a bin and describing it as “random sampling.”

When I ask why Shewhart used averages rather than individual measurements on X-bar charts, I have yet to hear anyone answer that averages follow a bell-shaped distribution even when individual measurements don’t. I have also seen software “solutions” that checked individual measurements against control limits set for averages…
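Both points can be illustrated with a small simulation. The sketch below is an assumption-laden toy: it draws individual measurements from an exponential distribution (a deliberately skewed choice), uses subgroups of 5, and, for simplicity, estimates sigma from all individuals rather than within subgroups as a real chart would. It then compares the skewness of individuals and averages, and counts how often each falls outside limits computed for averages.

```python
import random
import statistics

random.seed(0)   # fixed seed so the run is repeatable
N = 5            # subgroup size (assumption)
SUBGROUPS = 5000

# Skewed individual measurements: exponential with mean 1.
individuals = [random.expovariate(1.0) for _ in range(SUBGROUPS * N)]
averages = [statistics.mean(individuals[i:i + N])
            for i in range(0, len(individuals), N)]

def skewness(xs):
    """Third standardized moment; 0 for a symmetric distribution."""
    m = statistics.mean(xs)
    s = statistics.pstdev(xs)
    return statistics.mean(((x - m) / s) ** 3 for x in xs)

# 3-sigma limits appropriate for averages of N measurements.
center = statistics.mean(individuals)
sigma = statistics.pstdev(individuals)
half_width = 3 * sigma / N ** 0.5
lcl, ucl = center - half_width, center + half_width

def fraction_outside(xs):
    return sum(1 for x in xs if x < lcl or x > ucl) / len(xs)

print(f"skewness of individuals: {skewness(individuals):.2f}")
print(f"skewness of averages:    {skewness(averages):.2f}")
print(f"averages outside limits:    {fraction_outside(averages):.1%}")
print(f"individuals outside limits: {fraction_outside(individuals):.1%}")
```

With subgroups of only 5, the averages are still somewhat skewed, but markedly less so than the individuals. Checking individuals against limits meant for averages flags them several times more often, because those limits are narrower by a factor of the square root of the subgroup size.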

I believe the Black Belt concept in Six Sigma was intended as a solution to this problem. The idea was to give solid statistical training to 1% of the work force and let them be a resource for the remaining 99%.

The Black Belts were not expected to be statisticians at the level of academic specialists, but process engineers with enough knowledge of modern statistics to be effective in achieving process capability where it is a challenge.

Jan 3 2012

Omissions on the ASQ’s History-of-Quality web page

Going backwards in time, the ASQ website’s page on the history of quality ignores Lean Quality in the late 20th century, interchangeable parts technology in the 19th, and the origin of the concept of “quality” in ancient Rome.

Specifically:

The first omission is critical because Lean Quality is the state of the art in quality management. The second is mind boggling: how could a history of quality skip over a massive, decade-long and eventually successful undertaking that was targeting the elimination of variability? The third is a detail.

By Michel Baudin • History 1 • Tags: Lean, Quality